GaOUG Tech Days Session

GaOUG Tech Days is just around the corner: May 9-10, to be exact. We have an incredible venue in Downtown Atlanta, the Loudermilk Center, with exceptional event space throughout. We also have a great collection of national speakers from the Oracle community, so locals in Atlanta can get a feel for larger national conferences without leaving the Dirty Dirty. On top of that, the hugely popular and always engaging Maria Colgan, Master Product Manager at Oracle, is giving the keynote, “What to Expect from Oracle Database 12c.”

The Loudermilk Center in Downtown Atlanta is the perfect venue for GaOUG Tech Days.

This blog post is part of a “blog hop” by several of the GaOUG Tech Days speakers. A blog hop is similar to a webring (I’m dating myself here): a group of bloggers get together and publish a series of posts at the same time, with links between them. We tried to represent several of the streams running at Tech Days: Cloud, Database, Middleware, and Big Data. Like this one, each of the posts listed below talks about the author’s sessions and why you should attend GaOUG Tech Days. Thanks to the speakers for all that they do, including helping us get the word out about the conference.

Bobby Curtis, Oracle, “Oracle GoldenGate 101”

Chris Lawless, Dbvisit, “Kafka for the Oracle DBA”

Danny Bryant, Accenture Enkitec, “REST Request and JSON with APEX Packages”

Eric Helmer, Mercury Technology Group, “Maintaining, Monitoring, Administering, and Patching Oracle EPM Systems”

Jim Czuprynski, OnX Enterprise Solutions, “DBA 3.0: Transform Yourself into a Cloud DBA, or Face a Stormy Future” and “Stop Guessing, Start Analyzing: New Analytic View Features in Oracle Database 12cR2”

Apache Kafka and Data Streaming

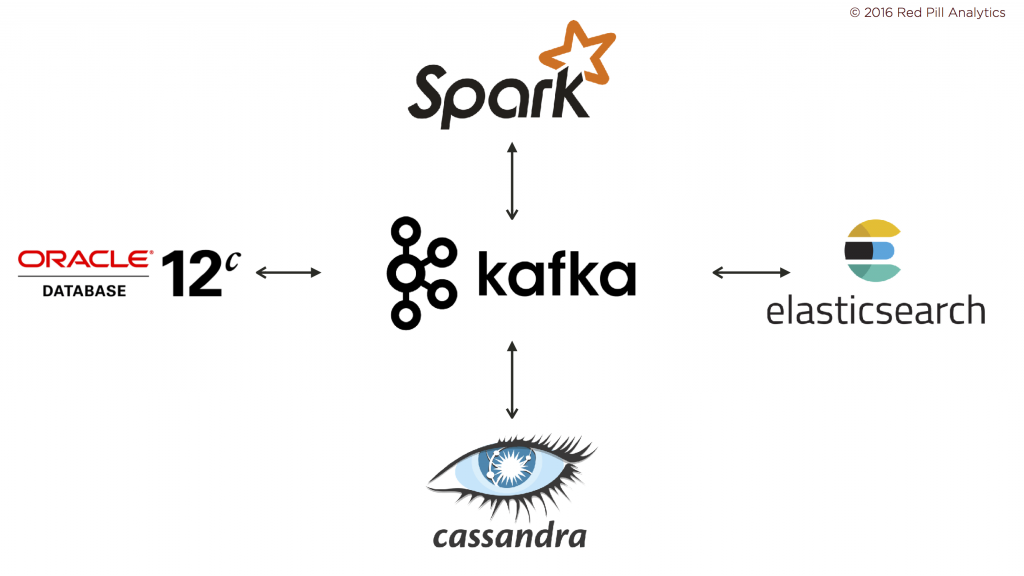

So you’d like me to tell you a little bit about my session? Sure. Of course. If you say so. As you can guess from the title, I’ll be talking about Apache Kafka. With new data management and data pipeline frameworks burrowing their way into the enterprise, Kafka has become a popular project because it provides a single point of ingestion for enterprise data, regardless of how those data will be used downstream. Traditionally, when building standard ETL processes, we tightly coupled the ingestion of data, the processing of data, and their downstream delivery; it’s all right there in the name: “extract, transform, load.” Kafka brings some order to this chaos. It serves as the enterprise’s distributed commit log, letting us ingest data without first deciding how we plan to use them.

Apache Kafka is a single point of Data Ingestion
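To make that decoupling a little more concrete, here is a toy, single-process sketch in Python of the commit-log idea: producers only append records, and each consumer keeps its own offset, so a downstream use case added later can still replay everything from the beginning. This is not Kafka or its client API; `CommitLog` and `Consumer` are hypothetical names, and real Kafka layers partitions, replication, retention, and consumer groups on top of this basic model.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any


@dataclass
class CommitLog:
    """Toy, single-partition, in-memory append-only log (hypothetical, not Kafka)."""
    records: list[Any] = field(default_factory=list)

    def append(self, record: Any) -> int:
        """Ingest a record and return its offset; the producer knows nothing about consumers."""
        self.records.append(record)
        return len(self.records) - 1

    def read(self, offset: int, max_records: int = 10) -> list[Any]:
        """Return up to max_records records starting at the given offset."""
        return self.records[offset:offset + max_records]


@dataclass
class Consumer:
    """Each consumer tracks its own position, so readers never interfere with each other."""
    log: CommitLog
    offset: int = 0

    def poll(self, max_records: int = 10) -> list[Any]:
        batch = self.log.read(self.offset, max_records)
        self.offset += len(batch)  # advance only this consumer's position
        return batch


log = CommitLog()
for event in ["order:1", "order:2", "refund:1"]:
    log.append(event)

billing = Consumer(log)
print(billing.poll())              # ['order:1', 'order:2', 'refund:1']

# A use case added later can still replay the log from offset 0.
audit = Consumer(log)
print(audit.poll(max_records=2))   # ['order:1', 'order:2']

log.append("order:3")
print(billing.poll())              # ['order:3']
```

The key design point is that ingestion (`append`) never changes when a new consumer shows up; only the consumers decide what to read and when.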

Although it wasn’t available when I submitted the abstract, Event Hub is now available in the Oracle Cloud. It is full-on Apache Kafka in the cloud, and Oracle is perhaps the first of the enterprise cloud vendors to offer it. There’s quite a bit of scaffolding around Event Hub to make it gel with the rest of the Oracle Cloud; we’ll take a look at how “Kafka” it really is, and whether this additional scaffolding bears fruit for developers and administrators.

Analytic Microservices

With Kafka as the backbone, I’ll propose a new way of thinking about data and analytics. Taking a cue from the modern practice of building applications as collections of small, independent services that communicate with each other (microservices), we’ll look at why this approach is so appealing, and then explore how Apache Kafka lets us apply similar design techniques to deliver a cohesive analytics platform.
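As a rough illustration of the analytic-microservices idea, the sketch below (plain Python, assuming a list stands in for a Kafka topic) shows two small, single-purpose services reading the same event stream. Each keeps its own offset, so they can be deployed, run, and catch up independently. `PageViewCounter`, `RevenueTotal`, and the event fields are hypothetical examples, not part of any Kafka or Oracle API.

```python
from collections import Counter

# Hypothetical shared event stream; a plain list stands in for a Kafka topic.
events: list = []


class PageViewCounter:
    """Single-purpose analytic service: counts page views per page."""

    def __init__(self) -> None:
        self.offset = 0          # this service's own read position in the stream
        self.counts = Counter()

    def catch_up(self, stream: list) -> None:
        for event in stream[self.offset:]:
            if event["type"] == "page_view":
                self.counts[event["page"]] += 1
        self.offset = len(stream)


class RevenueTotal:
    """A second, independent service: sums purchase amounts."""

    def __init__(self) -> None:
        self.offset = 0
        self.revenue = 0.0

    def catch_up(self, stream: list) -> None:
        for event in stream[self.offset:]:
            if event["type"] == "purchase":
                self.revenue += event["amount"]
        self.offset = len(stream)


events.extend([
    {"type": "page_view", "page": "/home"},
    {"type": "purchase", "amount": 20.0},
    {"type": "page_view", "page": "/pricing"},
])

page_views, revenue = PageViewCounter(), RevenueTotal()
page_views.catch_up(events)      # each service runs on its own schedule

events.append({"type": "purchase", "amount": 5.5})
events.append({"type": "page_view", "page": "/home"})
revenue.catch_up(events)         # started later, but still sees every event
page_views.catch_up(events)      # resumes from its own offset

print(dict(page_views.counts))   # {'/home': 2, '/pricing': 1}
print(revenue.revenue)           # 25.5
```

Because neither service knows about the other, adding a third analytic means writing another small consumer rather than changing the ingestion pipeline.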

See us at GaOUG Tech Days

If you’re going to be in Atlanta on May 9 and 10, register for GaOUG Tech Days and join us for a great couple of days. 2017 will be the GaOUG’s best event to date, with excellent content and a can’t-be-beat keynote speaker in Maria Colgan. If you want to chat with me directly about Tech Days, reach out to me and let me know how I can help. We look forward to seeing you in Atlanta in May.
